An overwhelming majority (97%) of compliance professionals in Ireland’s financial services industry believe that criminals are exploiting AI faster than regulators and firms can respond. And almost one in three (31%) believe firms using AI tools should bear ultimate accountability for AI-generated errors, while only one in ten (11%) believe that vendors developing the technology should take the flak.

This is according to the findings of a nationwide survey by the Compliance Institute of over 150 senior compliance professionals working across Irish financial services organisations.

Headline findings from the Compliance Institute survey reveal that:

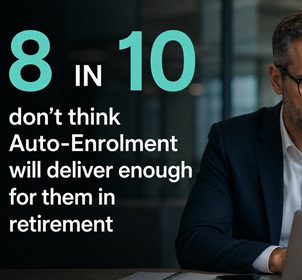

- More than three in four (77%) compliance professionals believe that regulators and firms are behind the curve when it comes to the exploitation of AI by criminals.

- One in five (20%) experts feel that while criminals are “somewhat” exploiting AI faster than regulators and firms can respond, “the gap is closing” (see Table 1 in Appendix).

- Only a tiny minority (3%) of those surveyed believe that current safeguards are keeping pace with the criminal exploitation of AI.

Commenting on the findings of the survey, Michael Kavanagh, CEO of the Compliance Institute, said:

“AI, a technology which has developed at a rapid pace in recent years, is now being increasingly used by scammers in their attempts to defraud people. Unfortunately, cybercrime is developing and advancing at such a pace that organisations and legislators are struggling to keep up, and this includes the increased exploitation of AI by criminals. It is worrying that an overwhelming majority of compliance professionals believe that criminals are exploiting AI faster than regulators and firms can respond.

Whether corporate Ireland likes it or not, AI is part of – and is changing – our workforce, and the risks that come with that need to be managed. Strong regulation of AI is important and regulators have a key role to play here. But equally, firms need to take steps themselves to ensure they stay ahead of the risks posed by AI. Staff training in and awareness of AI is crucial – such training should not only equip staff with the know-how to make the most of AI, it should also help staff protect their business against the risks of, and possible fallouts from, AI.”

The Compliance Institute survey also asked compliance professionals who they believed should bear ultimate responsibility in the event of AI-generated errors – such as false money laundering alarms (where a transaction or customer is mistakenly flagged as suspicious by an anti-money laundering system, even though it is legitimate) or biased monitoring (where discrimination may be embedded in AI systems, resulting in unfair or harmful results). Highlights from this element of the research reveal that:

- Almost one in three (31%) believe the user of the AI tools should bear ultimate accountability for AI-generated errors, while one in ten (11%) believe that vendors developing the technology should take the flak. Only 3% believe that regulators should bear ultimate responsibility for such errors.

- More than half (55%) of compliance professionals believe responsibility for such errors should be shared amongst all stakeholders (firms, vendors and regulators).

Mr Kavanagh explained:

“The fallout from AI-generated errors can be immense for companies. Not only could companies face substantial costs following an AI error, their reputation could also be at stake. The rise of AI brings opportunities and challenges for companies. AI, if used correctly, can improve productivity and efficiency in an organisation. However, over-reliance on or misuse of the technology could have severe repercussions for firms, including significant data privacy breaches, reputational damage, legal liabilities and financial penalties. All companies and businesses need to be mindful of this when using AI.”

The findings of the Compliance Institute survey emerge shortly after Banking & Payments Federation Ireland (BPFI) warned that there has been a marked increase in adverts featuring fake AI-generated endorsements from well-regarded and trusted celebrities[1]. As these adverts can be difficult to recognise as fake, an increasing number of people have fallen victim to such scams. Gardaí reported a 21% increase in investment scams in the three months up to October 2025.

Mr Kavanagh commented:

“False and misleading social media ads can have major repercussions for consumers. These include financial loss from purchasing low-quality or non-existent products, and health risks from purchasing unregulated health products which could turn out to be dangerous.

Fraudsters are able to use various technologies – including AI – to identify and target people as well as to extract the information they need to steal from someone. The growing sophistication of fraudsters means scams have become harder to spot, and therefore easier to fall for.

Scams and cybercrime can have catastrophic consequences not just for those whom they are perpetrated against, but for the wider public. We only have to look at the widespread disruption following the 2021 hacking of the HSE to understand the severity of the crimes. It’s incumbent on us all – consumers, businesses and regulators alike – to educate ourselves so that we can protect ourselves as much as possible from the dangers of cybercrime.”

[1] As per the BPFI release “Investment scams alert following marked increase in AI-generated adverts”.